As part of Azimuth’s AAIM Lab, Software Developer Aaron Crutcher is currently training an algorithm for ground-based object detection. Beginning with an open-source program, You Only Look Once, Version 3 (YOLOv3), he is reprogramming and optimizing the real-time object detection algorithm to identify numerous everyday objects, such as streetlights, trains, buses, cars, birds, and buildings, in videos, live feeds, or static images. Aaron selected YOLOv3 as the baseline for this effort because of the runtime efficiency and adaptability of its underlying convolutional neural network (CNN). He has modified the algorithm settings, optimized the hyperparameters, and partitioned large data sets obtained from open-source tools to train the algorithm to detect objects in video. Checking the trained weights against validation data makes the algorithm more efficient and more specialized, improving the overall utility of the program.
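To illustrate the partitioning step mentioned above, here is a minimal sketch of one common way to split a set of images into training and validation subsets. It is not Aaron's actual pipeline: the function name `split_dataset`, the 80/20 ratio, the seed, and the file names are all assumptions made for illustration.

```python
import random


def split_dataset(image_paths, val_fraction=0.2, seed=0):
    """Shuffle image paths reproducibly and split them into (train, val) lists.

    Hypothetical helper for illustration; the split ratio and seed are assumptions.
    """
    if not 0.0 < val_fraction < 1.0:
        raise ValueError("val_fraction must be strictly between 0 and 1")
    # Sort first so the result does not depend on the order of the input.
    paths = sorted(image_paths)
    # A dedicated Random instance keeps the shuffle reproducible for a given seed.
    random.Random(seed).shuffle(paths)
    # Hold out at least one image for validation.
    n_val = max(1, int(len(paths) * val_fraction))
    return paths[n_val:], paths[:n_val]


# Hypothetical file names standing in for frames from an open-source data set.
images = [f"images/frame_{i:04d}.jpg" for i in range(10)]
train, val = split_dataset(images, val_fraction=0.2)
print(len(train), "training images,", len(val), "validation images")
```

Holding the validation images out of training is what allows the trained weights to be checked against data the network has not seen.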

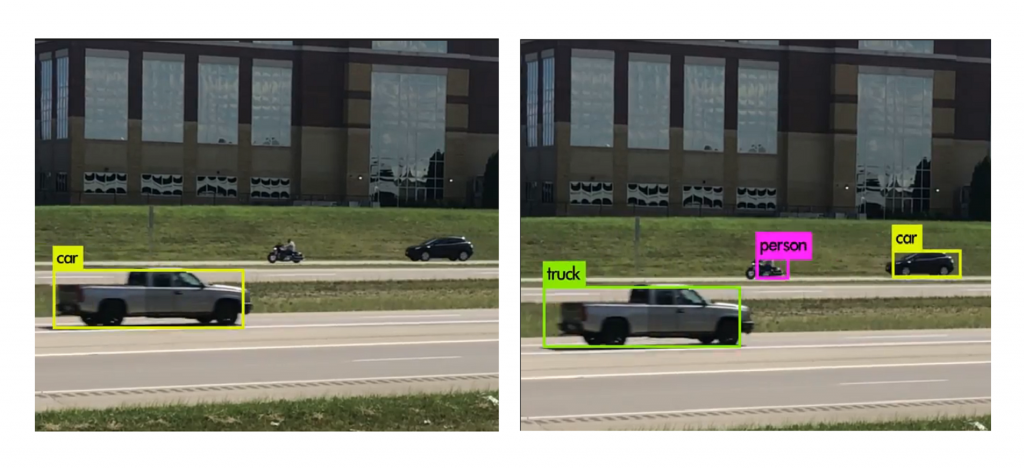

Azimuth’s high-performance computing cluster will enable vastly improved detection results. As illustrated by the output below from a similar multi-GPU system, increased memory capacity and computational power will allow for tighter settings and faster, more efficient training, ultimately yielding a more accurate algorithm that outclasses what a single laptop can produce.

Output of the algorithm trained on a laptop vs. output of pretrained weights, similar to the results expected from the AAIM Lab cluster machine

The work of training on these large data sets will be applicable to future satellite, aerial, and ground-based initiatives, allowing Aaron and the AAIM Lab to better train future algorithms and achieve improved detection results. These detection results could also be applied to future knowledge extraction, anomaly detection, and open-source simulation.
